LLM Sandbox: An Isolated, Secure Environment for AI Code Execution

benevolence · 2026-03-31 11:00:24

LLM Sandbox

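Securely execute LLM-generated code with ease

LLM Sandbox is a lightweight, portable sandbox environment for running code generated by large language models (LLMs) in a secure, isolated mode. It gives AI-generated code a safe place to execute and simplifies running that code through flexible container backends and comprehensive language support.

Documentation: https://vndee.github.io/llm-sandbox/

✨ New: The project now supports a Model Context Protocol (MCP) server, which lets your MCP client (for example, Claude Desktop) run LLM-generated code in a secure sandbox environment.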

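🚀 Key Features

🛡️ Security First

- Isolated execution: code runs in isolated containers with no access to the host system
- Security policies: define custom security policies to control code execution
- Resource limits: set CPU, memory, and execution-time limits
- Network isolation: control network access for sandboxed code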
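How these controls map onto a container runtime depends on the backend. As a rough sketch, assuming the Docker backend and the docker-py SDK (the `docker` package), resource limits and network isolation can be expressed as keyword arguments to `client.containers.run()`. The helper name `sandbox_run_options` and its defaults are hypothetical and are not llm-sandbox's internal code; the option names themselves (`nano_cpus`, `mem_limit`, `pids_limit`, `network_disabled`, `cap_drop`, `security_opt`) are real docker-py parameters.

```python
from typing import Any, Dict


def sandbox_run_options(
    cpu_cores: float = 1.0,
    memory: str = "512m",
    allow_network: bool = False,
    max_pids: int = 64,
) -> Dict[str, Any]:
    """Build docker-py containers.run() kwargs for a locked-down container.

    Hypothetical helper for illustration; not llm-sandbox's own code.
    """
    if cpu_cores <= 0:
        raise ValueError("cpu_cores must be positive")
    return {
        # CPU quota expressed in units of 1e-9 CPUs (0.5 cores -> 500_000_000)
        "nano_cpus": int(cpu_cores * 1_000_000_000),
        # Hard memory cap, e.g. "512m" or "1g"
        "mem_limit": memory,
        # Cap the number of processes to blunt fork bombs
        "pids_limit": max_pids,
        # No network interface unless explicitly allowed
        "network_disabled": not allow_network,
        # Drop every Linux capability
        "cap_drop": ["ALL"],
        # Block privilege escalation through setuid binaries
        "security_opt": ["no-new-privileges"],
    }
```

With a running Docker daemon you would pass the result straight through, e.g. `docker.from_env().containers.run("python:3.11-slim", ["python", "-c", "print(1)"], detach=True, **sandbox_run_options())`. An execution-time limit is not a container option; it is typically enforced separately, for example by calling `container.wait(timeout=...)` and killing the container if the wait times out.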

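🏗️ Flexible Container Backends

- Docker: the most popular and widely supported option
- Kubernetes: enterprise-grade orchestration for scalable deployments
- Podman: rootless containers for stronger security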

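🌐 Multi-Language Support

Run code in multiple programming languages with automatic dependency management:

- Python - full ecosystem support, including pip packages
- JavaScript/Node.js - npm package installation
- Java - Maven and Gradle dependency management
- C++ - compilation and execution
- Go - module support and compilation
- R - statistical computing and data analysis with CRAN packages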
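Automatic dependency management ultimately comes down to running each ecosystem's package manager inside the container before the user's code. A minimal, hypothetical sketch of that mapping follows; it assumes the standard `pip`, `npm`, `go get`, and `Rscript -e "install.packages(...)"` CLIs, and llm-sandbox's actual commands and flags may differ. Java (Maven/Gradle) and C++ are left out because their dependencies are usually declared in build files rather than installed with a single command.

```python
from typing import List


def install_command(lang: str, libraries: List[str]) -> List[str]:
    """Return an argv list that installs `libraries` for `lang`.

    Hypothetical sketch; not llm-sandbox's actual installer logic.
    """
    if not libraries:
        return []  # nothing to install
    if lang == "python":
        return ["pip", "install", "--no-cache-dir", *libraries]
    if lang == "javascript":
        return ["npm", "install", *libraries]
    if lang == "go":
        return ["go", "get", *libraries]
    if lang == "r":
        # Build an R vector literal such as c("ggplot2", "dplyr")
        pkgs = ", ".join(f'"{lib}"' for lib in libraries)
        return [
            "Rscript",
            "-e",
            f"install.packages(c({pkgs}), repos='https://cloud.r-project.org')",
        ]
    raise ValueError(f"no installer sketched for language: {lang}")
```

Returning an argv list rather than a shell string avoids quoting problems when the command is later executed inside the container.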

🔌 LLM Framework Integration

Integrates seamlessly with popular LLM frameworks such as LangChain, LangGraph, LlamaIndex, OpenAI, and more.

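📊 Advanced Features

- Artifact extraction: automatically capture charts and visualizations
- Library management: install dependencies on the fly
- File operations: copy files between the sandbox and the host
- Custom images: use your own container images
- Fast production mode: skip environment setup to speed up container startup
- Container pooling: pre-warm and reuse containers for better performance (new!)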

📦 Installation

Basic installation

```bash
pip install llm-sandbox
```

Installing support for a specific backend

```bash
# Docker support (most common)
pip install 'llm-sandbox[docker]'

# Kubernetes support
pip install 'llm-sandbox[k8s]'

# Podman support
pip install 'llm-sandbox[podman]'

# All backends
pip install 'llm-sandbox[docker,k8s,podman]'
```

Development installation

```bash
git clone https://github.com/vndee/llm-sandbox.git
cd llm-sandbox
pip install -e '.[dev]'
```

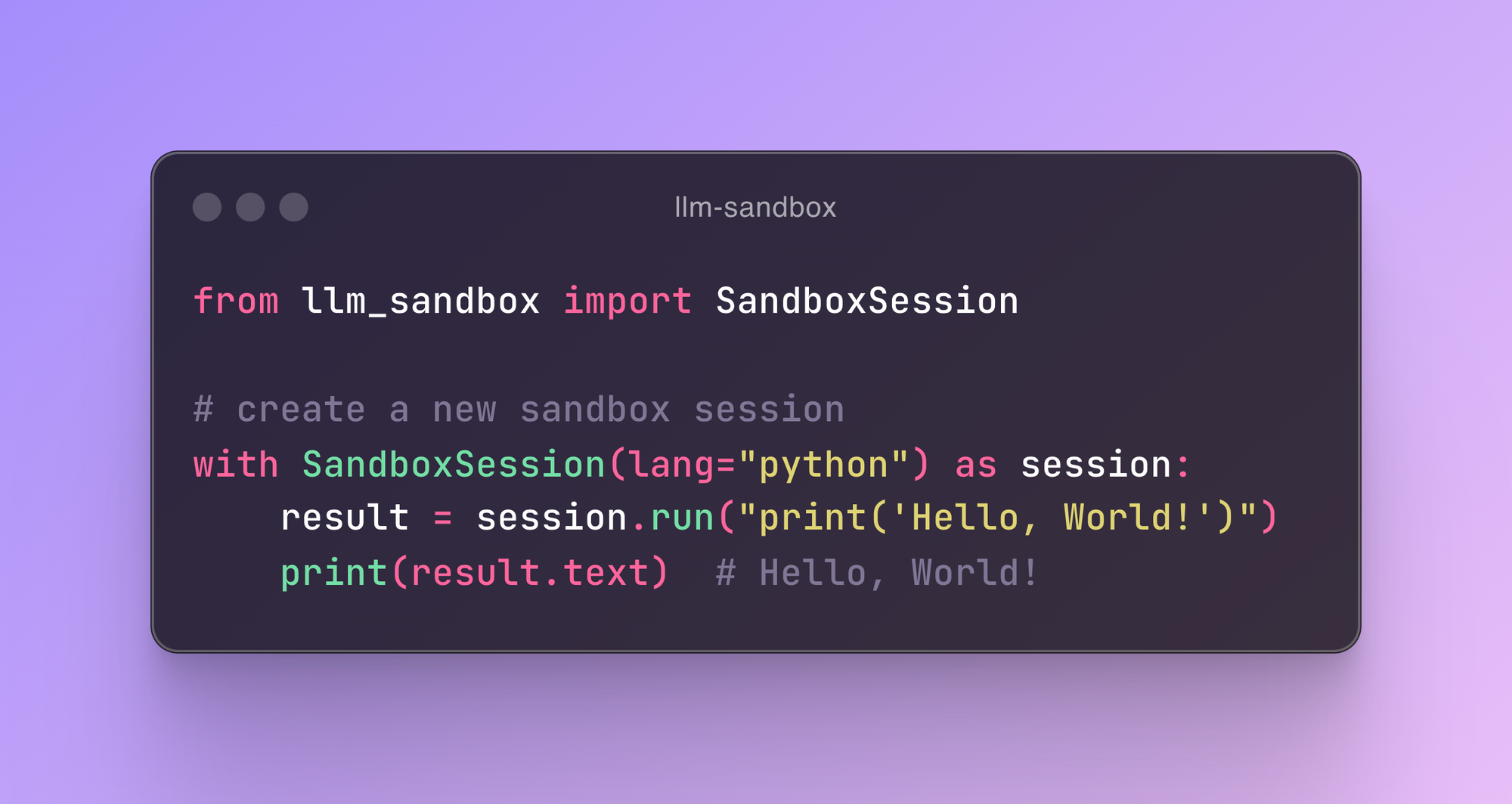

🏃‍♂️ Quick Start

Basic usage

```python
from llm_sandbox import SandboxSession

# Create and use a sandbox session
with SandboxSession(lang="python") as session:
    result = session.run("""
print("Hello from LLM Sandbox!")
print("I'm running in a secure container.")
""")

    print(result.stdout)
```

Installing libraries

```python
from llm_sandbox import SandboxSession

with SandboxSession(lang="python") as session:
    result = session.run("""
import numpy as np

# Create an array
arr = np.array([1, 2, 3, 4, 5])
print(f"Array: {arr}")
print(f"Mean: {np.mean(arr)}")
""", libraries=["numpy"])

    print(result.stdout)
```

Multi-language support

JavaScript

```python
from llm_sandbox import SandboxSession

with SandboxSession(lang="javascript") as session:
    result = session.run("""
const greeting = "Hello from Node.js!";
console.log(greeting);

const axios = require('axios');
console.log("Axios loaded successfully!");
""", libraries=["axios"])

    print(result.stdout)
```

Java

```python
from llm_sandbox import SandboxSession

with SandboxSession(lang="java") as session:
    result = session.run("""
public class HelloWorld {
    public static void main(String[] args) {
        System.out.println("Hello from Java!");
    }
}
""")

    print(result.stdout)
```

C++

```python
from llm_sandbox import SandboxSession

with SandboxSession(lang="cpp") as session:
    result = session.run("""
#include <iostream>

int main() {
    std::cout << "Hello from C++!" << std::endl;
    return 0;
}
""")

    print(result.stdout)
```

Go

```python
from llm_sandbox import SandboxSession

with SandboxSession(lang="go") as session:
    result = session.run("""
package main

import "fmt"

func main() {
    fmt.Println("Hello from Go!")
}
""")

    print(result.stdout)
```

R

```python
from llm_sandbox import SandboxSession

with SandboxSession(
    lang="r",
    image="ghcr.io/vndee/sandbox-r-451-bullseye",
    verbose=True,
) as session:
    result = session.run(
        """
# Basic R operations
print("=== Basic R Demo ===")

# Create some data
numbers <- c(1, 2, 3, 4, 5, 10, 15, 20)
print(paste("Numbers:", paste(numbers, collapse=", ")))

# Basic statistics
print(paste("Mean:", mean(numbers)))
print(paste("Median:", median(numbers)))
print(paste("Standard Deviation:", sd(numbers)))

# Work with data frames
df <- data.frame(
    name = c("Alice", "Bob", "Charlie", "Diana"),
    age = c(25, 30, 35, 28),
    score = c(85, 92, 78, 96)
)

print("=== Data Frame ===")
print(df)

# Calculate average score
avg_score <- mean(df$score)
print(paste("Average Score:", avg_score))
"""
    )

    print(result.stdout)
```

Interactive sessions

For notebook-like workflows, use InteractiveSandboxSession, which preserves the Python interpreter state across multiple run calls.

```python
from llm_sandbox import InteractiveSandboxSession

with InteractiveSandboxSession(
    lang="python",
    kernel_type="ipython",
    history_size=200,
) as session:
    session.run("value = 21 * 2")
    result = session.run("print(f'Result: {value}')")
    print(result.stdout)  # -> Result: 42

    # Install a library with a magic command
    session.run("%pip install pandas")
    result = session.run("import pandas as pd; print(pd.DataFrame({'A': [1, 2, 3], 'B': [4, 5, 6]}))")
    print(result.stdout)
```

Interactive sessions work with the Docker, Podman, and Kubernetes backends and currently target Python. They start a long-running IPython kernel inside the sandbox, so each run() call behaves like a notebook cell: state, imports, and magic commands stay alive until the context manager exits, with no extra networking or manual serialization required.
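The state-keeping behavior can be illustrated without a container. The toy `NotebookLikeRunner` class below (a hypothetical name, not part of llm-sandbox) executes every snippet in one shared namespace, so variables and imports survive between `run()` calls. It provides no isolation at all and runs code in the host process; it only mimics the cell-to-cell persistence that a long-lived IPython kernel gives `InteractiveSandboxSession`.

```python
import contextlib
import io
from typing import Any, Dict


class NotebookLikeRunner:
    """Run code snippets in one persistent namespace, like notebook cells.

    Illustration only: this executes untrusted code on the host, unsandboxed.
    """

    def __init__(self) -> None:
        # Shared globals for every run(); exec() also adds __builtins__ here
        self.namespace: Dict[str, Any] = {}

    def run(self, code: str) -> str:
        """Execute `code` and return whatever it printed to stdout."""
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, self.namespace)
        return buffer.getvalue()


runner = NotebookLikeRunner()
runner.run("value = 21 * 2")  # defines `value` in the shared namespace
print(runner.run("print(f'Result: {value}')"), end="")  # prints: Result: 42
```

A real sandboxed kernel keeps the same contract (one namespace per session) but holds it in a separate process inside the container, which is why state disappears once the session exits.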

Capturing plots and visualizations

Python plots

```python
from llm_sandbox import ArtifactSandboxSession
import base64
from pathlib import Path

with ArtifactSandboxSession(lang="python") as session:
    result = session.run("""
import matplotlib.pyplot as plt
import numpy as np

x = np.linspace(0, 10, 100)
y = np.sin(x)

plt.figure(figsize=(10, 6))
plt.plot(x, y)
plt.title("Sine Wave")
plt.xlabel("x")
plt.ylabel("sin(x)")
plt.grid(True)
plt.savefig("sine_wave.png", dpi=150, bbox_inches="tight")
plt.show()
""", libraries=["matplotlib", "numpy"])

    # Inspect the captured plots
    print(f"Generated {len(result.plots)} plots")

    # Save each plot to a file
    for i, plot in enumerate(result.plots):
        plot_path = Path(f"plot_{i + 1}.{plot.format.value}")
        with plot_path.open("wb") as f:
            f.write(base64.b64decode(plot.content_base64))
```

R plots

```python
from llm_sandbox import ArtifactSandboxSession
import base64
from pathlib import Path

with ArtifactSandboxSession(lang="r") as session:
    result = session.run("""
library(ggplot2)

# Create sample data
data <- data.frame(
    x = rnorm(100),
    y = rnorm(100)
)

# Create ggplot2 visualization
p <- ggplot(data, aes(x = x, y = y)) +
    geom_point(alpha = 0.6) +
    geom_smooth(method = "lm", se = FALSE) +
    labs(title = "Scatter Plot with Trend Line",
         x = "X values", y = "Y values") +
    theme_minimal()
print(p)

# Base R plot
hist(data$x, main = "Distribution of X",
     xlab = "X values", col = "lightblue", breaks = 20)
""", libraries=["ggplot2"])

    # Inspect the captured plots
    print(f"Generated {len(result.plots)} R plots")

    # Save each plot to a file
    for i, plot in enumerate(result.plots):
        plot_path = Path(f"r_plot_{i + 1}.{plot.format.value}")
        with plot_path.open("wb") as f:
            f.write(base64.b64decode(plot.content_base64))
```

🔧 Configuration

Basic configuration

```python
from llm_sandbox import SandboxSession

# Create a new sandbox session from a specific image
with SandboxSession(image="python:3.9.19-bullseye", keep_template=True, lang="python") as session:
    result = session.run("print('Hello, World!')")
    print(result)

# Or build the image from a custom Dockerfile
with SandboxSession(dockerfile="Dockerfile", keep_template=True, lang="python") as session:
    result = session.run("print('Hello, World!')")
    print(result)

# Or use the default image
with SandboxSession(lang="python", keep_template=True) as session:
    result = session.run("print('Hello, World!')")
    print(result)
```

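LLM Sandbox also supports copying files between the host and the sandbox:

```python
from llm_sandbox import SandboxSession

with SandboxSession(lang="python", keep_template=True) as session:
    # Copy a file from the host into the sandbox
    session.copy_to_runtime("test.py", "/sandbox/test.py")

    # Run the copied Python script inside the sandbox
    result = session.execute_command("python /sandbox/test.py")
    print(result)

    # Copy a file from the sandbox back to the host
    # (assumes test.py wrote /sandbox/output.txt)
    session.copy_from_runtime("/sandbox/output.txt", "output.txt")
```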
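Under the Docker backend, file copies have to travel as tar archives: the Docker Engine API, and docker-py's `Container.put_archive()` / `Container.get_archive()`, only accept and return tar streams. The sketch below shows that packing and unpacking step with the standard `tarfile` module. The helper names are hypothetical, and this is not a claim about how `copy_to_runtime` / `copy_from_runtime` are implemented internally.

```python
import io
import tarfile


def pack_file_for_docker(arcname: str, content: bytes) -> bytes:
    """Wrap one file in an in-memory tar archive (what put_archive expects)."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        info = tarfile.TarInfo(name=arcname)
        info.size = len(content)  # tar headers must state the exact size
        info.mode = 0o644         # rw-r--r-- inside the container
        tar.addfile(info, io.BytesIO(content))
    return buf.getvalue()


def unpack_single_file(tar_bytes: bytes) -> bytes:
    """Return the contents of the first regular file in a tar stream,
    e.g. the joined chunks yielded by get_archive()."""
    with tarfile.open(fileobj=io.BytesIO(tar_bytes), mode="r") as tar:
        for member in tar.getmembers():
            if member.isfile():
                extracted = tar.extractfile(member)
                if extracted is not None:
                    return extracted.read()
    raise FileNotFoundError("archive contains no regular file")
```

For example, `container.put_archive("/sandbox", pack_file_for_docker("test.py", Path("test.py").read_bytes()))` would place `test.py` in `/sandbox`, and `unpack_single_file(b"".join(container.get_archive("/sandbox/output.txt")[0]))` would read a file back.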

Custom runtime configuration

```python
from llm_sandbox import SandboxSession

pod_manifest = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {
        "name": "test",
        "namespace": "test",
        "labels": {"app": "sandbox"},
    },
    "spec": {
        "containers": [
            {
                "name": "sandbox-container",
                "image": "test",
                "tty": True,
                # volumeMounts is a list in the Kubernetes Pod spec
                "volumeMounts": [
                    {
                        "name": "tmp",
                        "mountPath": "/tmp",
                    }
                ],
            }
        ],
        "volumes": [{"name": "tmp", "emptyDir": {"sizeLimit": "5Gi"}}],
    },
}

with SandboxSession(
    backend="kubernetes",
    image="python:3.9.19-bullseye",
    dockerfile=None,
    lang="python",
    keep_template=False,
    verbose=False,
    pod_manifest=pod_manifest,
) as session:
    result = session.run("print('Hello, World!')")
    print(result)
```

Remote Docker host

```python
import docker

from llm_sandbox import SandboxSession

# TLS client configuration for a remote Docker daemon
tls_config = docker.tls.TLSConfig(
    client_cert=("path/to/cert.pem", "path/to/key.pem"),
    ca_cert="path/to/ca.pem",
    verify=True,
)

docker_client = docker.DockerClient(base_url="tcp://<your_host>:<port>", tls=tls_config)

with SandboxSession(
    client=docker_client,
    image="python:3.9.19-bullseye",
    keep_template=True,
    lang="python",
) as session:
    result = session.run("print('Hello, World!')")
    print(result)
```

Kubernetes support

```python
from kubernetes import client, config

from llm_sandbox import SandboxSession

# Use the local kubeconfig
config.load_kube_config()
k8s_client = client.CoreV1Api()

with SandboxSession(
    client=k8s_client,
    backend="kubernetes",
    image="python:3.9.19-bullseye",
    lang="python",
    pod_manifest=pod_manifest,  # from the example above; defaults to None
) as session:
    result = session.run("print('Hello from Kubernetes!')")
    print(result)
```

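⚠️ Important notes on custom Pod manifests:

When using a custom Pod manifest, make sure your container configuration includes:

- "tty": True (keeps the container alive)
- An appropriate securityContext at both the Pod and container level
- The container name can be any valid name (no restrictions)

See the Configuration Guide for the full requirements.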
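These requirements are easy to check before a manifest reaches the cluster. The hypothetical `check_pod_manifest` helper below (not part of llm-sandbox) reports a missing or false `tty`, a missing Pod-level or container-level `securityContext`, and, because Kubernetes defines `volumeMounts` as a list, a `volumeMounts` value of the wrong type. It only checks for presence; it does not judge which security settings are appropriate.

```python
from typing import Any, Dict, List


def check_pod_manifest(manifest: Dict[str, Any]) -> List[str]:
    """Return human-readable problems found in a custom Pod manifest.

    Hypothetical pre-flight check; an empty list means no problems found.
    """
    problems: List[str] = []
    spec = manifest.get("spec", {})
    if "securityContext" not in spec:
        problems.append("spec.securityContext is missing")
    containers = spec.get("containers", [])
    if not containers:
        problems.append("spec.containers is empty")
    for i, container in enumerate(containers):
        name = container.get("name", f"#{i}")
        # tty keeps the sandbox container alive between commands
        if container.get("tty") is not True:
            problems.append(f"container {name}: tty must be True")
        if "securityContext" not in container:
            problems.append(f"container {name}: securityContext is missing")
        # The Pod spec defines volumeMounts as an array of mount objects
        if not isinstance(container.get("volumeMounts", []), list):
            problems.append(f"container {name}: volumeMounts must be a list")
    return problems
```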

Podman support

```python
from llm_sandbox import SandboxSession

with SandboxSession(
    backend="podman",
    lang="python",
    image="python:3.9.19-bullseye",
) as session:
    result = session.run("print('Hello from Podman!')")
    print(result)
```

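⚡ Container Pooling (Performance Optimization)

Container pooling improves performance significantly by reusing pre-warmed containers instead of creating a new container for every execution. This matters most for applications that execute code frequently.

Key benefits

- Faster execution: eliminates container-creation overhead (up to 10x faster)
- Pre-warmed environments: containers are already initialized with your dependencies
- Thread safety: concurrent requests are handled safely
- Resource efficiency: container lifecycles are managed automatically
- Flexible configuration: control pool size, timeouts, and behavior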
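The core idea can be sketched with a thread-safe queue. The `SimplePool` class below is a hypothetical, minimal illustration, not llm-sandbox's `PoolConfig`/`create_pool_manager` implementation: it pre-warms `min_size` resources, hands out idle ones first, creates new ones only up to `max_size`, and blocks (with a timeout) when the pool is exhausted. Idle timeouts, health checks, and container cleanup are deliberately left out.

```python
import queue
import threading
from typing import Callable, Generic, TypeVar

T = TypeVar("T")


class SimplePool(Generic[T]):
    """Minimal thread-safe pool of reusable resources (e.g. containers)."""

    def __init__(self, factory: Callable[[], T], min_size: int, max_size: int) -> None:
        if not 0 <= min_size <= max_size:
            raise ValueError("require 0 <= min_size <= max_size")
        self._factory = factory
        self._max_size = max_size
        self._idle: "queue.Queue[T]" = queue.Queue()  # warm, unused resources
        self._lock = threading.Lock()  # guards self._created
        self._created = 0
        # Pre-warming: create the minimum number of resources up front
        for _ in range(min_size):
            self._idle.put(factory())
            self._created += 1

    def acquire(self, timeout: float = 5.0) -> T:
        """Reuse an idle resource, create one while under max_size,
        otherwise wait up to `timeout` seconds (raises queue.Empty)."""
        try:
            return self._idle.get_nowait()  # fast path: reuse a warm one
        except queue.Empty:
            pass
        with self._lock:
            can_create = self._created < self._max_size
            if can_create:
                self._created += 1  # reserve a slot before creating
        if can_create:
            try:
                return self._factory()
            except BaseException:
                with self._lock:
                    self._created -= 1  # give the reserved slot back
                raise
        return self._idle.get(timeout=timeout)  # block until release()

    def release(self, item: T) -> None:
        """Return a resource so the next acquire() can reuse it."""
        self._idle.put(item)
```

The counter is reserved under the lock but the (slow) factory call runs outside it, so one thread starting a container does not stall others that only need an idle one.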

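Basic pool usage

```python
from llm_sandbox import SandboxSession
from llm_sandbox.pool import PoolConfig, create_pool_manager

# Create a pool manager explicitly
pool = create_pool_manager(
    backend="docker",
    config=PoolConfig(
        max_pool_size=10,  # Maximum number of containers
        min_pool_size=3,  # Keep at least 3 warm containers
        idle_timeout=300.0,  # Recycle containers idle for 5 minutes
        enable_prewarming=True,  # Create containers at startup
    ),
    lang="python",
)

try:
    # Use the pool in a session
    with SandboxSession(
        lang="python",
        pool=pool,
    ) as session:
        result = session.run("print('Hello from pool!')")
        # The container returns to the pool automatically when the session closes
finally:
    # Clean up the pool when finished
    pool.close()
```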

Sharing a pool across sessions

For maximum efficiency, share one pool across multiple sessions:

```python
from llm_sandbox import SandboxSession
from llm_sandbox.pool import create_pool_manager, PoolConfig

# Create a shared pool manager
pool = create_pool_manager(
    backend="docker",
    config=PoolConfig(
        max_pool_size=10,
        min_pool_size=3,
    ),
    lang="python",
    libraries=["numpy", "pandas"],  # Pre-install libraries in every container
)

try:
    # Use the same pool from multiple sessions
    with SandboxSession(lang="python", pool=pool) as session1:
        result1 = session1.run("import pandas; print(pandas.__version__)")

    with SandboxSession(lang="python", pool=pool) as session2:
        result2 = session2.run("import numpy; print(numpy.__version__)")
finally:
    pool.close()
```